I don’t take my blog very seriously. It’s a place where I leave myself reminders of things I figured out how to do, or share things I’ve done that won’t fit in a ~~tweet~~ Bluesky post. But even so, I get annoyed that my blog has often been offline.

My site’s hosting provider has a monthly bandwidth limit. When I hit that limit, my site is taken offline, replaced with an error page saying that I’ve exceeded my quota.

I’ll write a blog post that includes images, and if too many people look at the page too many times, the whole site goes offline for the rest of the month. (To be fair, it’s never a single post that does that – I don’t get that many hits! More often it’s when I’ve written a few posts in a month, and the last one pushes me over the line.) Normally I end up offline for just a day or two, but it has been over a week before.

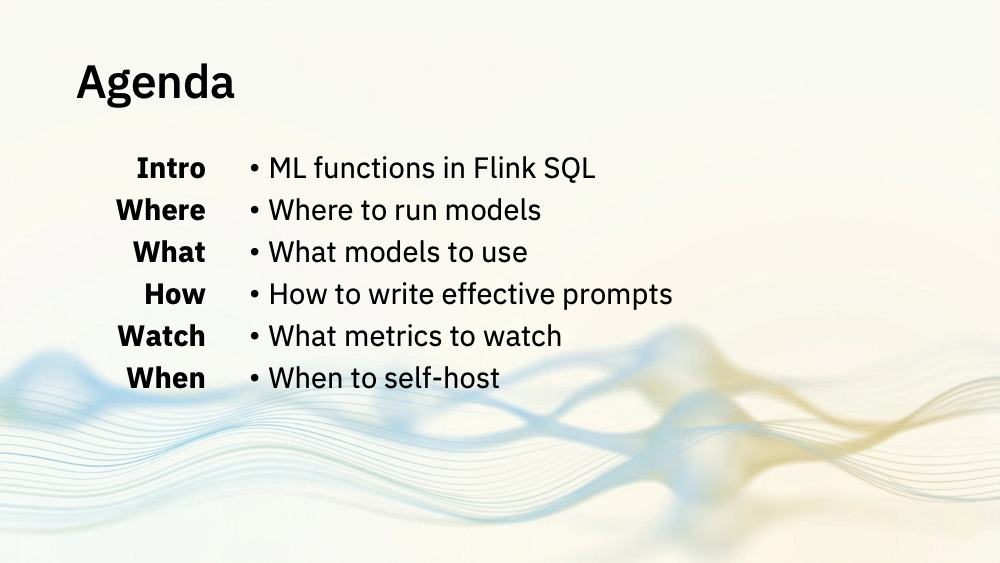

Last month, I finally decided to do something about it. I started looking at moving the images I use in blog posts somewhere else that wouldn’t count against my bandwidth limit. My blog isn’t serious enough for me to be willing to spend a lot on it, but I don’t mind paying something to make the worry about image bandwidth go away.

I searched for image hosting services, and started reading about services such as postimages.org ($14.99 a month), imgbb.com ($12.99 a month), sirv ($19 a month), and imagekit.io ($9 a month). Every service I found felt too limited, too expensive, or both.
