On Tuesday, a couple of dozen children (aged 8-14) spent the afternoon at Hursley so I could give them an intro to machine learning using some of the activities I’ve written for machinelearningforkids.co.uk.

I think it went pretty well, so I thought it’d be good to share what we did.

This was what the room looked like before the kids arrived… with just my two kids helping me set up. It all got a lot busier after this!

The general approach was to let them all work at computers, guided by a worksheet, to build something that illustrated an aspect of machine learning. We then followed this with a group discussion to draw out what they observed and what it meant.

We did this all together for the first couple of activities. Because of the large age range in the group, after this I let them split up and tackle different activities at different speeds, and followed this up by discussing their projects with them in smaller groups.

As not all the kids got to try all the activities after the first two, I also got some groups to present back their finished projects to the rest of the kids at the end. This way they all got to see all the activities, even if they didn’t all get to make all of them.

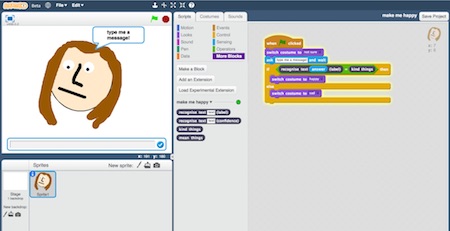

Activity: “Make me happy”

In this activity, they made a character in Scratch that reacts to compliments (by smiling) and insults (by crying). They had to write examples of compliments and insults, and use these to train a classifier.

There were a lot of ideas introduced in this activity that we’d revisit throughout the afternoon: text classification, supervised learning, what is involved in manually labelling training data, and how training a machine learning system can be quicker and easier than trying to specify rules to do the same thing.

For this first activity, they were also introduced to the idea of computers doing sentiment analysis, and recognising emotional tone in writing.

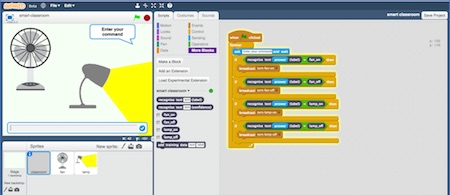

Activity: “Smart Classroom”

In this activity, they made a virtual assistant (something like an Amazon Alexa or Google Home) in Scratch. The aim was to be able to control virtual devices by giving commands to their virtual assistant.

They again had to train a classifier with examples of the different commands they wanted their devices to understand. But this time, this was text classification to recognise the intent rather than the sentiment.

This activity also introduced the idea of confidence thresholds. They got their virtual assistants to properly handle off-topic commands that had nothing to do with their devices, by replying “I’m sorry, I don’t understand” when the classifier wasn’t confident enough in its answer.
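The kids built this with Scratch blocks, but the idea of a confidence threshold can be sketched in a few lines of Python. This is only an illustration: the label names, the replies, and the 0.5 threshold are all made up for the example, and confidence is assumed to be a number between 0 and 1.

```python
# Hypothetical labels and replies, chosen only for illustration.
ACTIONS = {
    "lamp_on": "Turning the lamp on",
    "lamp_off": "Turning the lamp off",
    "fan_on": "Turning the fan on",
}


def respond(label, confidence, threshold=0.5):
    """Act on a classifier result only if it is confident enough."""
    # Low confidence usually means the command was off-topic,
    # so the assistant admits it instead of guessing.
    if confidence < threshold or label not in ACTIONS:
        return "I'm sorry, I don't understand"
    return ACTIONS[label]


print(respond("lamp_on", 0.92))  # confident, so the assistant acts
print(respond("fan_on", 0.21))   # not confident, so it apologises
```

Setting the threshold higher makes the assistant more cautious (more apologies, fewer wrong actions), and setting it lower does the opposite.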

We looked at some of the Skills in Amazon’s Alexa Skills store, and talked about how they would’ve trained one if they had to create it themselves. The takeaway, a surprise to some of them, was that they had now built something using the same basic ML techniques that are behind a lot of the Skills in the store. This was particularly powerful for the kids who’d used an Alexa at home before. Something that just worked like magic before was now something that they knew they could do themselves.

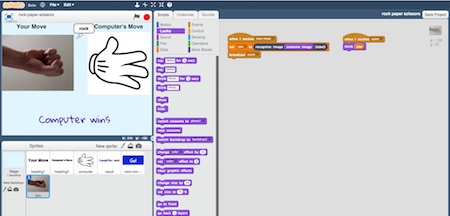

Activity: “Rock, Paper, Scissors”

In this activity, they made the game Rock, Paper, Scissors in Scratch, using a webcam to take a picture of their hand to let them play against the computer.

The previous activities showed how machines could be trained to recognise text (either the meaning of it, or the emotional tone of it). In this activity, we extended this to show that computers can be trained to do image recognition. They had to train an image classifier by taking photos of their hands in different shapes.

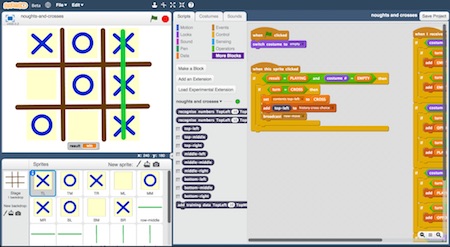

Activity: “Noughts & Crosses”

In this activity, they taught a machine learning system to play noughts and crosses. Starting from a simple noughts and crosses game in Scratch, they had to modify it to collect training data they’d use to train their decision tree classifier.

Instead of manually writing examples to train the computer, this time they collected training data by playing the game. The game worked from the start, albeit making terrible decisions in every move. But it learned from each game so that it got better and better the more games they played. This was a chance to introduce the idea of reinforcement learning, as an alternative to manually labelling training data.

The results were mixed, mostly because of the limited time they had to train their systems. But a couple of the groups ended up with a Scratch game that actually played a decent game of noughts and crosses – not winning every game, but winning quite a few!

Apart from being a chance to talk about AI in games, this activity also let me introduce a little history: I showed a picture of “MENACE” (the Machine Educable Noughts And Crosses Engine) created by Donald Michie in 1961, and explained how what he’d implemented using glass beads and matchboxes in the 1960s was similar to what they’d made in Scratch.
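For anyone curious how the matchbox idea works, here is a minimal Python sketch of a MENACE-style learner. It is not the decision tree approach the kids used in Scratch, and the names (`MatchboxPlayer`, `choose_move`, `learn`) are invented for this example. It assumes the board is a 9-character string with "." for an empty square, and uses the commonly described bead rewards of +3 for a win, +1 for a draw and -1 for a loss.

```python
import random


class MatchboxPlayer:
    """A toy MENACE-style learner: one 'matchbox' of beads per board state."""

    def __init__(self, initial_beads=3):
        self.initial_beads = initial_beads
        self.boxes = {}    # board string -> {square index: bead count}
        self.history = []  # (board, move) pairs played in the current game

    def choose_move(self, board):
        # Create a matchbox the first time a board is seen, with the same
        # number of beads for every empty square. Assumes at least one
        # square is still empty.
        box = self.boxes.setdefault(
            board,
            {i: self.initial_beads for i, c in enumerate(board) if c == "."},
        )
        moves = list(box)
        weights = [box[m] for m in moves]
        if sum(weights) == 0:
            # Every bead has been removed: fall back to a uniform choice.
            weights = [1] * len(moves)
        # Moves with more beads are more likely to be picked.
        move = random.choices(moves, weights=weights)[0]
        self.history.append((board, move))
        return move

    def learn(self, result):
        # Reward or punish every move made in the game just finished.
        change = {"win": 3, "draw": 1, "loss": -1}[result]
        for board, move in self.history:
            box = self.boxes[board]
            box[move] = max(0, box[move] + change)
        self.history.clear()


player = MatchboxPlayer()
first_move = player.choose_move(".........")
player.learn("win")  # the winning move now has more beads in its box
print(player.boxes["........."])
```

After enough games, moves that tended to lead to wins have many more beads than moves that led to losses, so they get chosen more often. That is the same "gets better the more you play" behaviour the kids saw.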

Activity: “Judge a book”

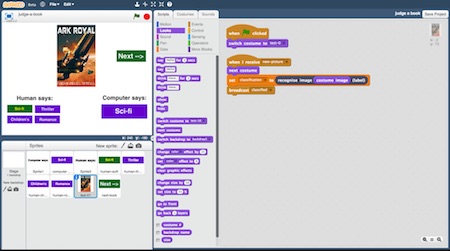

This activity was themed around the phrase “judge a book by its cover”. They trained an image classifier to recognise the type of book (e.g. children’s or food & drink) by a picture of its cover.

They made a game in Scratch that predicted the genre of a book based on its cover, checking how it would answer compared with a person.

Aside from being another chance to build an image recognition system, this also introduced the idea of measuring the effectiveness of a machine learning system by comparing its performance against the performance of a person at the same task. It was also a chance to explain why we test using examples that weren’t included in training (holdout validation).
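The comparison itself is simple arithmetic, and a short Python sketch shows the shape of it. The genres and guesses below are placeholders invented for the example, not results from the day. The important assumption is that none of these test covers were used to train the model.

```python
def accuracy(guesses, answers):
    """Fraction of guesses that match the correct answers."""
    correct = sum(g == a for g, a in zip(guesses, answers))
    return correct / len(answers)


# Made-up genres for five held-out book covers (not used in training).
answers = ["children", "food", "children", "food", "children"]

# Made-up guesses from the trained model and from a person.
model_guesses = ["children", "food", "food", "food", "children"]
human_guesses = ["children", "food", "children", "food", "children"]

print("model:", accuracy(model_guesses, answers))
print("human:", accuracy(human_guesses, answers))
```

Testing on covers the model has already seen would only show that it can remember them, which is why the test examples are kept separate.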

Activity: “Tourist Info”

In this activity, they made a tourist info app. They trained a machine learning model to recommend attractions that someone on holiday should visit, based on their interests. It was a chance to show how we use machine learning for recommendations.

I then got them to introduce a new holiday attraction and intentionally bias their training towards it. They gave it a lot more training examples than for the other attractions in their app, even removing training data from the other attractions to make sure that their model ended up preferring this new attraction.

This was a chance to talk about training bias and the AI ethics questions it introduces. After seeing for themselves the impact that this had on how their tourist info app behaved, we talked about whether this was fair. We talked about whether it would’ve been less unfair if they’d accidentally biased the app towards the one attraction, rather than doing it on purpose. And we talked about what responsibilities they thought people training machine learning systems should have.

We talked about whether this would be more or less fair if the app was making recommendations to doctors about medicines to use, rather than to holiday makers about places to visit.
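The effect of skewed training data can be shown with a deliberately simple toy classifier in Python. This is a stand-in for illustration only, not the model the kids trained: each training example "votes" for its label, weighted by how many words it shares with the question. The attractions and sentences are invented.

```python
from collections import Counter


def predict(training, query):
    """Pick the label whose training examples share the most words with the query."""
    query_words = set(query.lower().split())
    votes = Counter()
    for text, label in training:
        votes[label] += len(query_words & set(text.lower().split()))
    return votes.most_common(1)[0][0]


# Balanced training data: two examples per attraction.
balanced = [
    ("i like art and history", "museum"),
    ("i like old paintings", "museum"),
    ("i like sand and swimming", "beach"),
    ("i like the sea", "beach"),
]

# Biased training data: one museum example removed, and lots of
# examples added for the new attraction.
biased = [
    ("i like art and history", "museum"),
    ("i like sand and swimming", "beach"),
    ("i like the sea", "beach"),
] + [("i like rollercoasters", "theme park")] * 5

print(predict(balanced, "i like art"))  # museum
print(predict(biased, "i like art"))    # theme park
```

Nothing about the question changed between the two predictions. Only the balance of the training data did, which is what makes this kind of bias easy to introduce and hard to spot from the outside.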

Overall

We packed in a lot for an afternoon, but I was pleased to see how much they all learned.

Keeping all of the kids going, particularly as they were all doing different activities at different speeds, was tiring. Plus it wasn’t without hiccups… the kids did find a couple of small bugs which I’ll fix this week.

None of their passwords worked at first for some reason I still haven’t worked out, so I had to frantically reset them all while they waited.

Worse, it turns out that imgur (which I used to host the training images for Rock, Paper, Scissors) has an undocumented rate limit that kicks in if it thinks you are uploading images too fast, which blocked half the kids from being able to finish their training for that one activity.

But these glitches aside, I think the day worked. I hope they enjoyed it and I’m sure they learned a lot.
