This is IBM Event Automation: a new product we released last month to help our clients create event-driven solutions.

I’ve written a 200-word summary of what IBM Event Automation is, but in this post I wanted to dive a little bit deeper and show what it can do.

The foundational component is Event Streams, our event distribution platform based on Apache Kafka.

Events are everywhere nowadays.

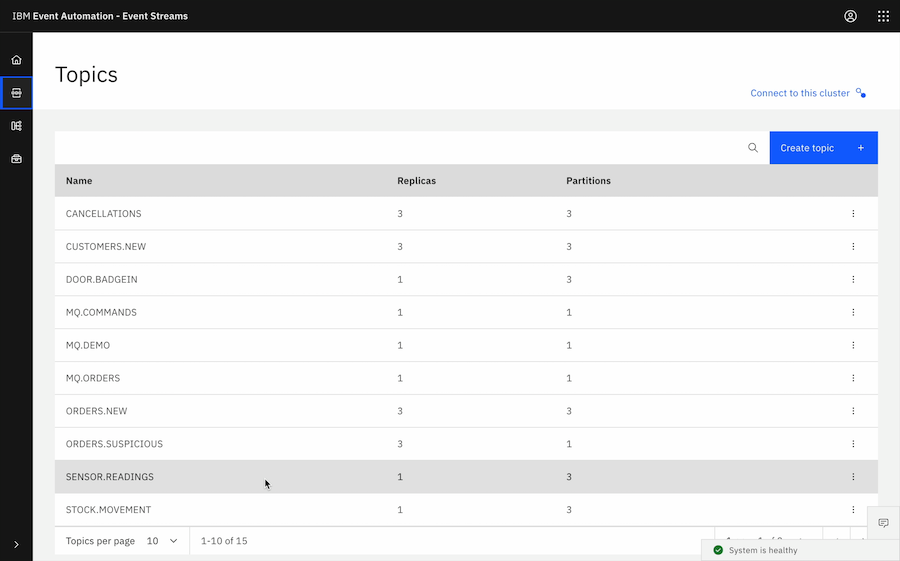

Some of your Kafka topics might be populated by a Kafka application that you write specifically to produce events.

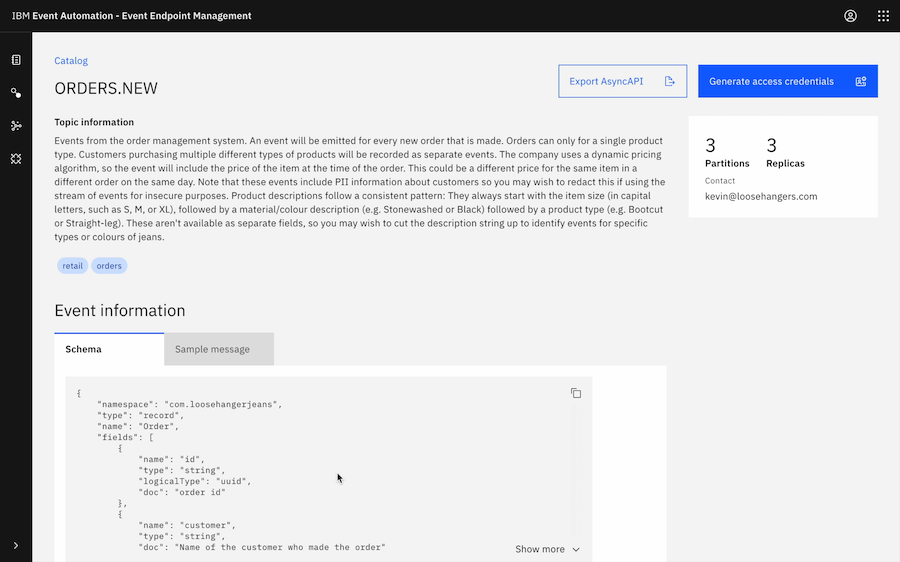

But increasingly, the adoption of Kafka as a de facto standard means all sorts of systems can emit events. When a new order is recorded in the order management system, maybe that can be emitted as an event. When a customer record is created in the customer management system, perhaps that could be emitted as an event.

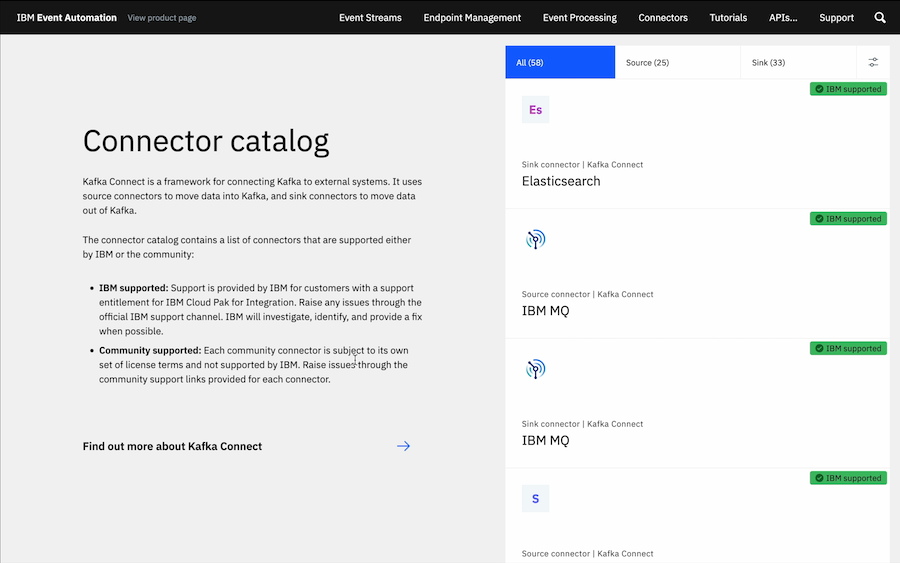

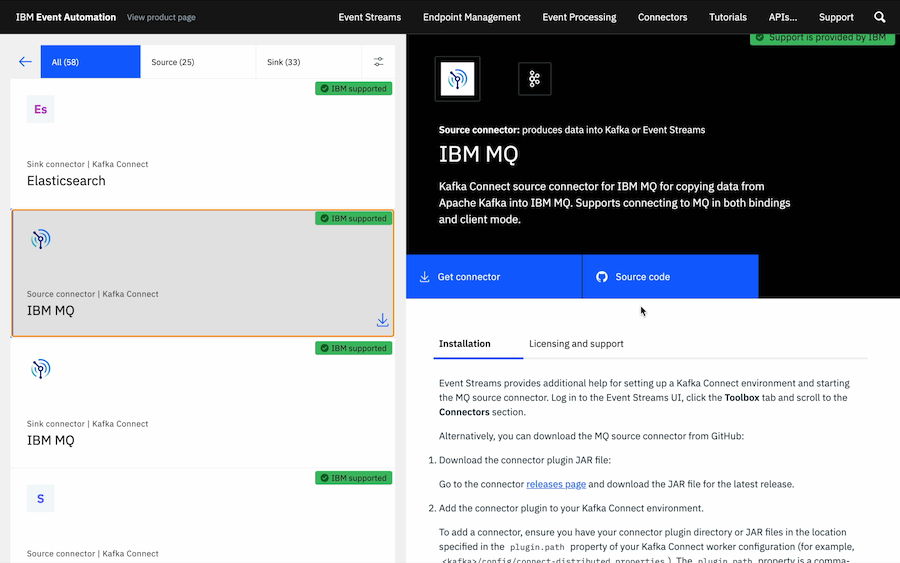

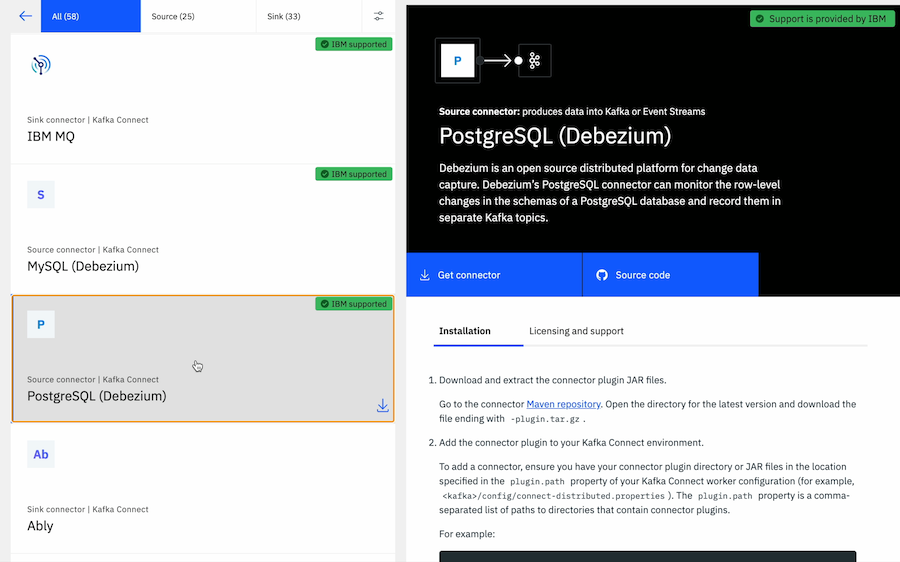

For systems that can’t natively emit Kafka events, IBM Event Automation provides a range of connectors that can capture events from key enterprise systems and surface them as events on Kafka topics.

More info:

Our MQ connectors mean that existing messaging flows can be used as another source of events.

See the MQ connectors at:

Attaching a connector to capture change events from a database means any existing system built around a backend database can contribute to an event-driven solution.
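To give a rough idea of what a change-capture connector emits, here is a minimal sketch that assumes a Debezium-style change envelope, where `op` is `c` for an insert, `u` for an update, `d` for a delete and `r` for a snapshot read, and `after` holds the row once the change is applied. It turns inserts on a hypothetical `customers` table into "new customer" events. The column names and the `to_new_customer_event` helper are illustrative only; the exact record format depends on the connector and converter you configure.

```python
import json
from typing import Optional

def to_new_customer_event(change_json: str) -> Optional[dict]:
    """Map a Debezium-style change record to a hypothetical 'new customer' event.

    Returns None for anything other than an insert ('op' == 'c'), because only
    newly created rows represent new customer registrations.
    """
    change = json.loads(change_json)
    if change.get("op") != "c":
        return None
    row = change["after"]  # row state after the change was applied
    return {
        "customerid": row["id"],        # hypothetical column names
        "customername": row["name"],
        "registered_ms": change["ts_ms"],
    }

insert = json.dumps({"op": "c", "before": None,
                     "after": {"id": "c-101", "name": "Ada"}, "ts_ms": 1700000000000})
update = json.dumps({"op": "u", "before": {"id": "c-101", "name": "Ada"},
                     "after": {"id": "c-101", "name": "Ada L."}, "ts_ms": 1700000005000})

print(to_new_customer_event(insert))
print(to_new_customer_event(update))  # None: updates are not new registrations
```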

Collecting the events isn’t enough.

For events to be useful, businesses need to do something with them.

But processing events in isolation isn’t enough. You can’t get insight from an individual event without context.

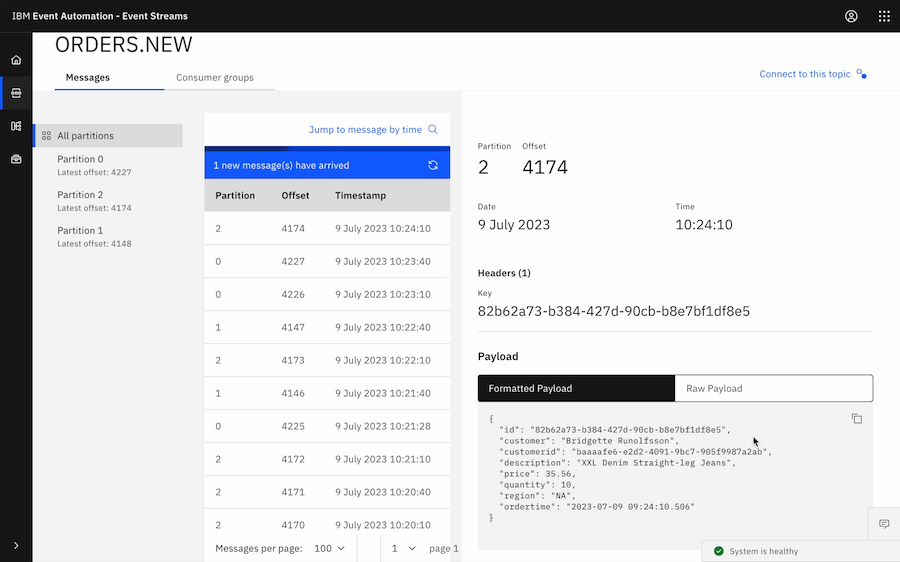

Take this event.

It was emitted to record that an order was made. It tells me who ordered something, what they ordered, how much they paid, and when they ordered it.
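An event like this is usually a small structured payload. The sketch below models one as a Python dataclass and serializes it to JSON, which is one common way to write it to a Kafka topic. The field names and values are hypothetical, not the schema used in this demo.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class OrderEvent:
    """A hypothetical order event: who ordered, what, how much, and when."""
    orderid: str
    customerid: str    # who ordered it
    description: str   # what they ordered
    quantity: int
    price: float       # how much they paid
    ordertime: str     # when they ordered it (ISO 8601 timestamp)

order = OrderEvent(
    orderid="o-1",
    customerid="c-101",
    description="XL Stonewashed Bootcut Jeans",
    quantity=2,
    price=59.98,
    ordertime="2023-06-01T10:15:00Z",
)

# Serialize to JSON, the form in which it might be written to a Kafka topic.
payload = json.dumps(asdict(order))
print(payload)
```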

Get an event generator to create test events like these:

But that isn’t enough.

Was this an order made by a customer immediately after creating an account?

If I just look at this order by itself, I can’t know.

Does this order represent an increasing trend in the number of product types being sold?

Without looking at the other orders in the same time window, I can’t know.

Is this order potentially suspicious – part of a series of orders and other actions taken to try to manipulate and take advantage of a dynamic pricing algorithm?

For all of these insights, context is key.

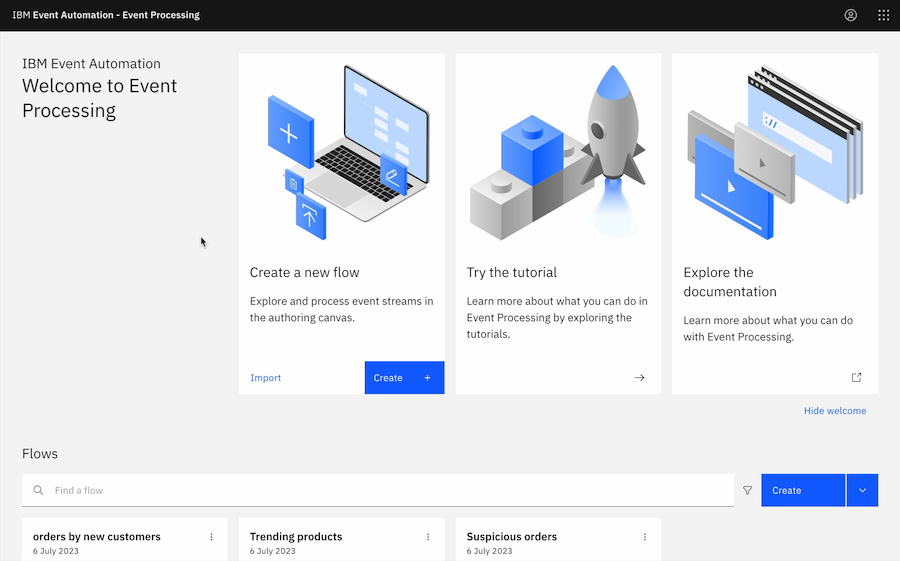

For this, IBM Event Automation includes Event Processing – a low-code tool for creating event processing flows that lets non-technical users get insight from these streams of events with the context of other events that occurred before and after them, correlating across multiple disparate streams of events.

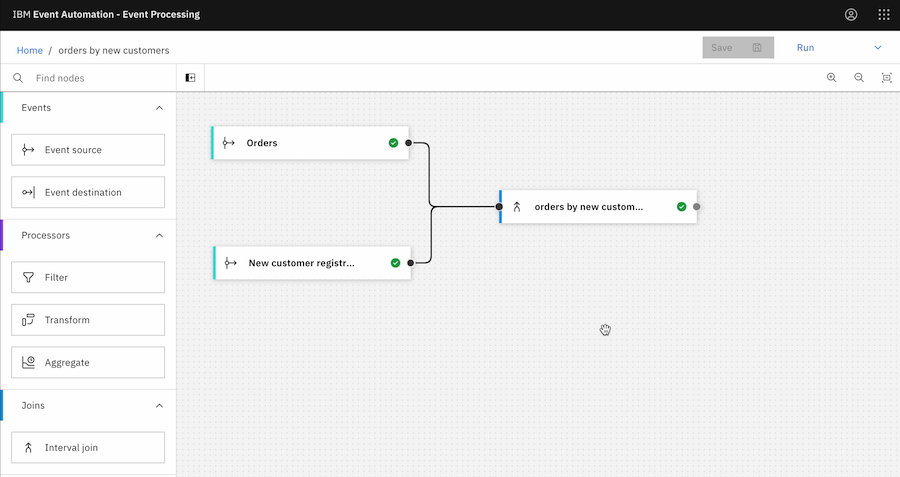

For example – was that order made by a customer immediately after creating an account?

By dragging on a stream of order events, and a stream of new customer registration events, and wiring them together, I can easily identify the orders by new customers as they occur.
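The semantics of that flow are roughly those of an interval join: correlate the two streams on a shared customer ID, and keep only the orders that fall within a time window after the registration. Here is a minimal batch sketch in Python, assuming a hypothetical 24-hour window and plain tuples for events. A streaming engine evaluates this incrementally as events arrive and discards state once the window has passed; this version only shows what counts as a match.

```python
from datetime import datetime, timedelta

def orders_by_new_customers(registrations, orders, window=timedelta(hours=24)):
    """Interval join of orders with customer registrations.

    registrations: iterable of (customerid, registered_at)
    orders: iterable of (orderid, customerid, ordered_at)
    Returns (orderid, customerid, time_since_registration) for each order
    placed within `window` after that customer registered.
    """
    registered_at = {customer: ts for customer, ts in registrations}
    matches = []
    for orderid, customer, ordered_at in orders:
        reg = registered_at.get(customer)  # correlate on the shared customer ID
        if reg is not None and reg <= ordered_at <= reg + window:
            matches.append((orderid, customer, ordered_at - reg))
    return matches

t0 = datetime(2023, 6, 1, 9, 0)
registrations = [("c-1", t0), ("c-2", t0 - timedelta(days=30))]
orders = [
    ("o-1", "c-1", t0 + timedelta(minutes=20)),  # new customer, inside the window
    ("o-2", "c-2", t0 + timedelta(minutes=5)),   # long-standing customer
    ("o-3", "c-1", t0 + timedelta(days=2)),      # new customer, but too late
]
result = orders_by_new_customers(registrations, orders)
print([orderid for orderid, _, _ in result])  # ['o-1']
```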

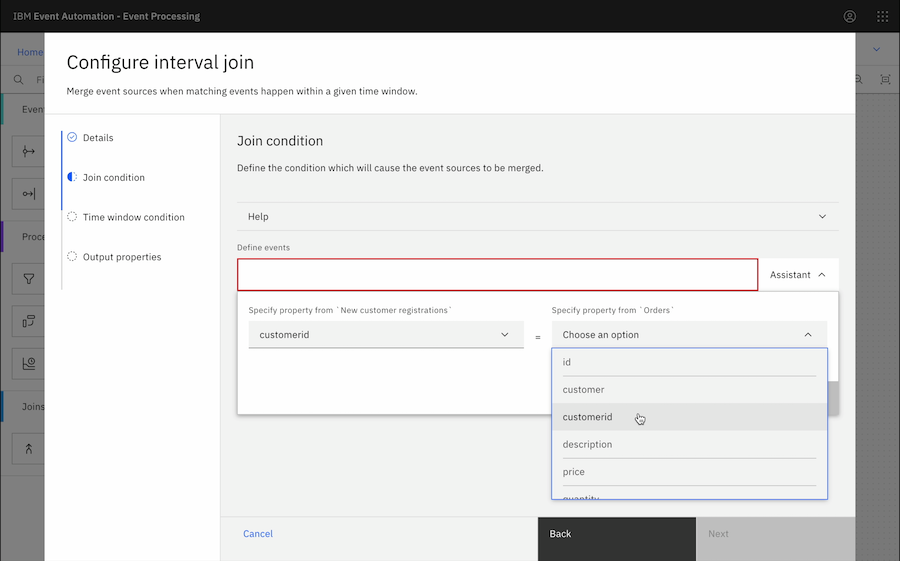

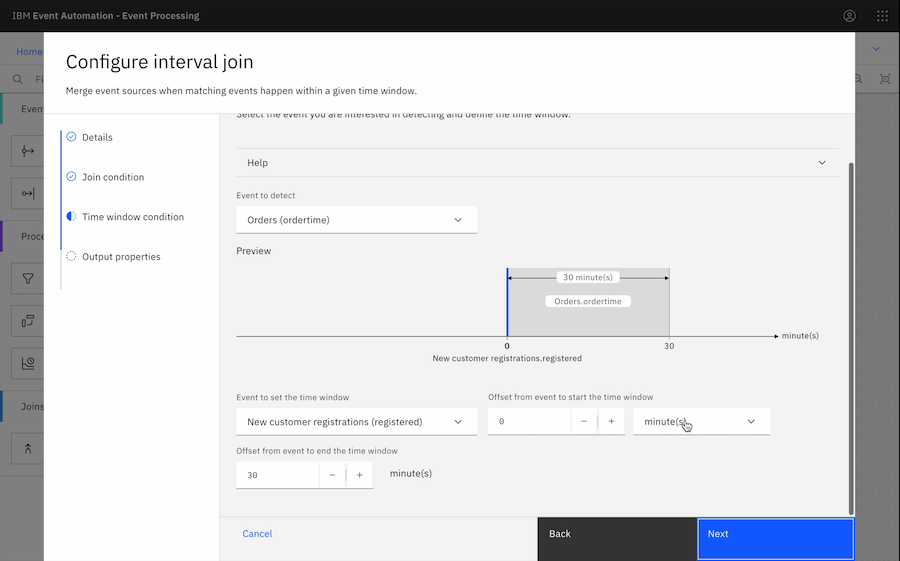

Assistants guide the configuration of each of these nodes, for example helping you identify which properties are common to events in the different streams and can be used to correlate them.

Try creating event processing nodes for yourself:

There is also a visual editor for defining the time window to use when joining streams of events.

I can try out my flow from the authoring tool and see the results as I configure it.

With just a few nodes, I may have taken my first steps towards creating a new customer loyalty campaign: a responsive solution that lets me reach out to a new customer after their first order in a delightful, context-sensitive way.

Or was that order part of a trend?

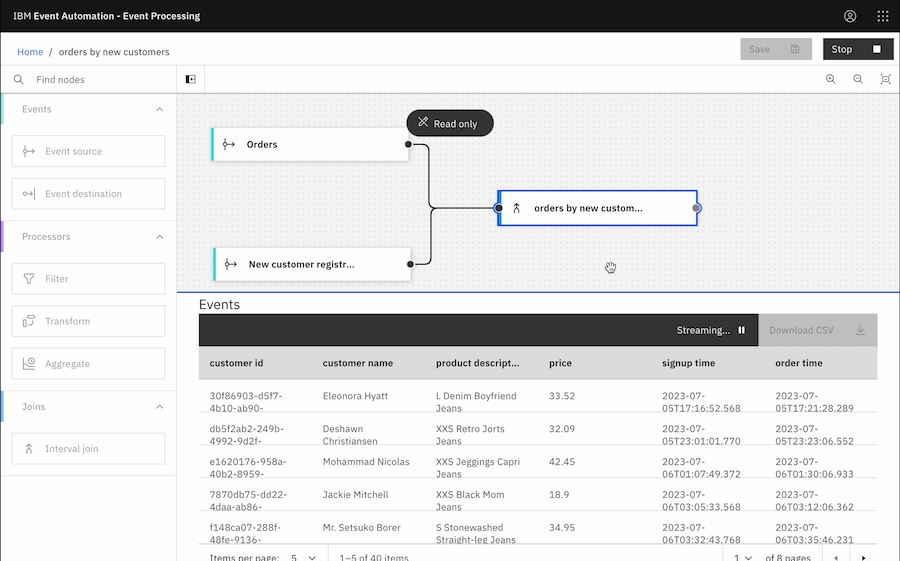

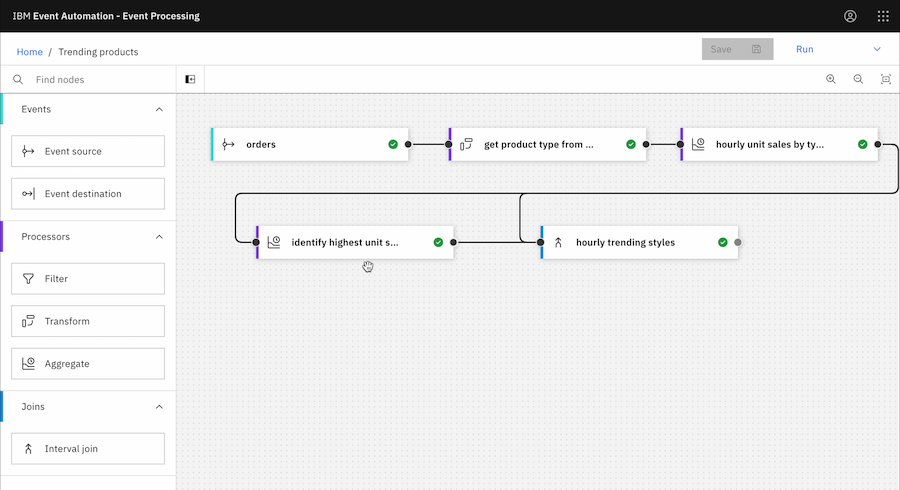

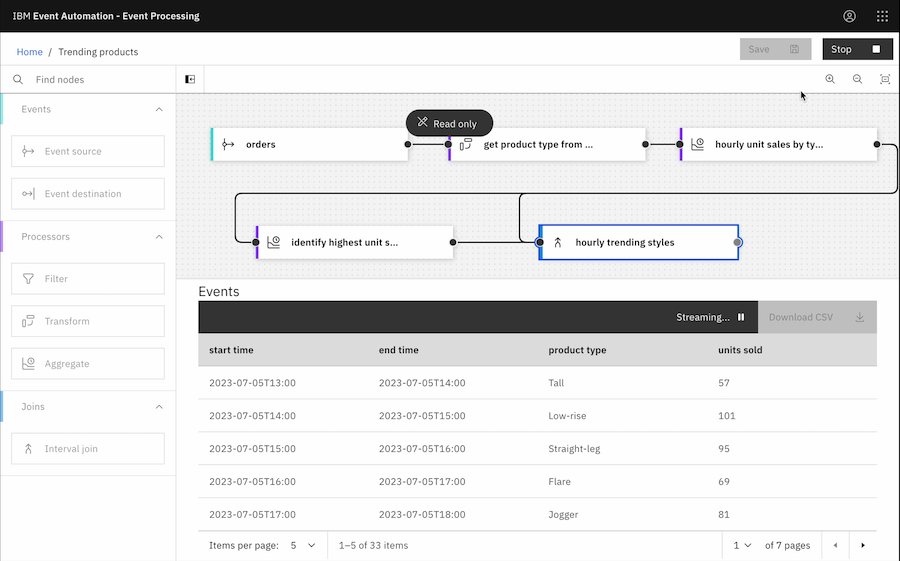

By dragging on a stream of order events, extracting the product type from the product description, doing a time-based aggregation that tracks the number of units sold of each type within each one-hour window, and then picking out the highest, I can see which product type has sold the most units in each hour. Each of these is a simple processing step; I just wire them together.
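The aggregation step behaves like a one-hour tumbling window: each order falls into exactly one hour-long bucket, units are summed per product type within the bucket, and the type with the largest total is picked. The sketch below assumes, purely for illustration, that the product type is the last word of the description; `product_type` and `top_type_per_hour` are hypothetical helpers, and ties go to whichever type was seen first in that hour.

```python
from collections import defaultdict
from datetime import datetime

def product_type(description: str) -> str:
    # Hypothetical rule: the product type is the last word of the description.
    return description.split()[-1].lower()

def top_type_per_hour(orders):
    """orders: iterable of (ordered_at, description, quantity).

    Returns {window_start: (product_type, units)} for the best-selling
    product type in each one-hour tumbling window.
    """
    units = defaultdict(lambda: defaultdict(int))
    for ordered_at, description, quantity in orders:
        # Truncate to the hour: every order belongs to exactly one window.
        window_start = ordered_at.replace(minute=0, second=0, microsecond=0)
        units[window_start][product_type(description)] += quantity
    return {
        start: max(counts.items(), key=lambda item: item[1])
        for start, counts in sorted(units.items())
    }

orders = [
    (datetime(2023, 6, 1, 10, 5), "XL Stonewashed Bootcut Jeans", 2),
    (datetime(2023, 6, 1, 10, 40), "M Black Crew-neck Sweater", 1),
    (datetime(2023, 6, 1, 10, 55), "S Blue Skinny Jeans", 1),
    (datetime(2023, 6, 1, 11, 10), "L Grey Wool Sweater", 3),
]
for start, (ptype, total) in top_type_per_hour(orders).items():
    print(start.strftime("%H:%M"), ptype, total)
# 10:00 jeans 3
# 11:00 sweater 3
```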

I can test this out as I refine the flow.

Try creating this event processing flow for yourself:

Maybe this is the start of driving a new dynamic section of my website for my online retail channel – highlighting the current trending product types, to help drive additional sales for the current hot items.

Or was that order possibly suspicious?

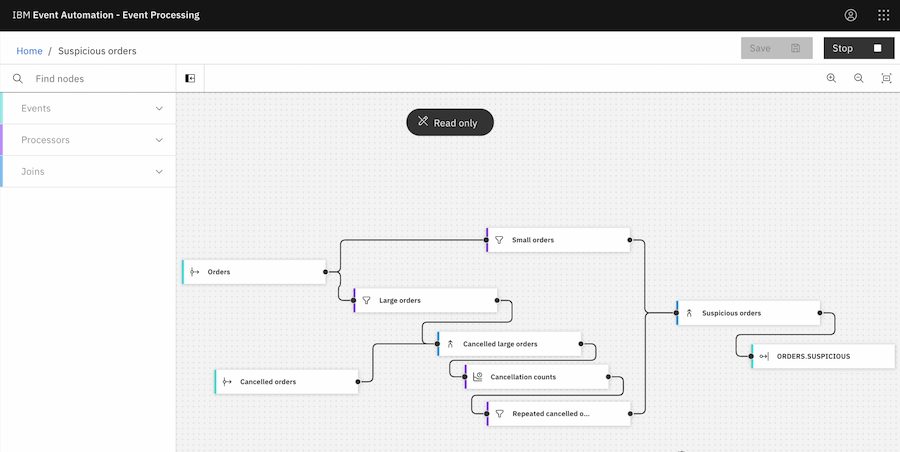

I can create a flow that looks for patterns of behaviour that are possibly suspicious. Say I have a dynamic pricing algorithm that responds to stock levels. I might suspect that people are making repeated large orders to influence the price, and later cancelling them, before making a small order of what they actually want.

Try creating this event processing flow for yourself:

I want to examine orders in the context of other orders of the same item, and other actions, like order cancellations.
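One way to express that pattern, sketched here in Python with hypothetical thresholds: flag a small order when the same customer has placed, within the preceding 30 minutes, at least two large orders of the same product that were then cancelled. The event shape, the `suspicious_orders` function and every threshold are assumptions chosen for illustration, not rules from the product.

```python
from datetime import datetime, timedelta

def suspicious_orders(events, large_qty=10, small_qty=2, min_cancelled=2,
                      window=timedelta(minutes=30)):
    """Flag small orders that follow repeated cancelled large orders of the same item.

    events: dicts with 'time', 'type' ('order' or 'cancel') and 'orderid';
    orders also carry 'customer', 'product' and 'quantity'.
    """
    open_large = {}  # orderid -> (customer, product, time) of large orders not yet cancelled
    cancelled = []   # (customer, product, time) of large orders that were later cancelled
    flagged = []
    for e in sorted(events, key=lambda e: e["time"]):
        if e["type"] == "cancel":
            if e["orderid"] in open_large:
                cancelled.append(open_large.pop(e["orderid"]))
        # From here on the event is an order.
        elif e["quantity"] >= large_qty:
            open_large[e["orderid"]] = (e["customer"], e["product"], e["time"])
        elif e["quantity"] <= small_qty:
            key = (e["customer"], e["product"])
            recent = sum(1 for c, p, t in cancelled
                         if (c, p) == key and e["time"] - t <= window)
            if recent >= min_cancelled:
                flagged.append(e["orderid"])
    return flagged

t0 = datetime(2023, 6, 1, 10, 0)
events = [
    {"time": t0, "type": "order", "orderid": "o-1", "customer": "c-9", "product": "jeans", "quantity": 20},
    {"time": t0 + timedelta(minutes=2), "type": "order", "orderid": "o-2", "customer": "c-9", "product": "jeans", "quantity": 25},
    {"time": t0 + timedelta(minutes=5), "type": "cancel", "orderid": "o-1"},
    {"time": t0 + timedelta(minutes=6), "type": "cancel", "orderid": "o-2"},
    {"time": t0 + timedelta(minutes=8), "type": "order", "orderid": "o-3", "customer": "c-9", "product": "jeans", "quantity": 1},
    {"time": t0 + timedelta(minutes=9), "type": "order", "orderid": "o-4", "customer": "c-5", "product": "jeans", "quantity": 1},
]
print(suspicious_orders(events))  # ['o-3']
```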

This kind of real-time processing and analysis has traditionally been complex, requiring dedicated skills and specialized technologies and tools. But as you’ve seen, we’re putting the ability to extract insight from events in the hands of the non-technical business user.

Even these few simple flows could be used to drive value, so it’s worth quickly looking at how that could be done.

This is where Event Automation again benefits from the ubiquity and openness of Apache Kafka as a foundation. The results of the event processing flows can be emitted as new streams of events on Kafka topics, which can be used to trigger notifications.
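Downstream, consuming an output topic is ordinary Kafka consumption. The sketch below shows the shape of it with an in-memory list standing in for the output topic and a `notify` callback standing in for whatever target system receives the notification. `encode_result` and `dispatch` are hypothetical helpers, not part of IBM Event Automation or App Connect.

```python
import json
from typing import Callable, Iterable, Tuple

Message = Tuple[bytes, bytes]  # (key, value) as read from a Kafka topic

def encode_result(orderid: str, customer: str) -> Message:
    """Encode one flow result as a keyed event for a hypothetical output topic."""
    value = {"orderid": orderid, "customerid": customer, "reason": "suspicious-order"}
    return customer.encode("utf-8"), json.dumps(value).encode("utf-8")

def dispatch(messages: Iterable[Message], notify: Callable[[str], None]) -> int:
    """Turn each output event into a notification; returns how many were sent."""
    sent = 0
    for key, value in messages:
        event = json.loads(value)
        notify(f"Check order {event['orderid']} from customer {key.decode('utf-8')}")
        sent += 1
    return sent

outbox = []
topic = [encode_result("o-3", "c-9")]  # stands in for records on the output topic
count = dispatch(topic, outbox.append)
print(count, outbox)
```

Keying each output event by customer ID means that, with Kafka's default partitioning, all events for one customer land on the same partition and are consumed in order.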

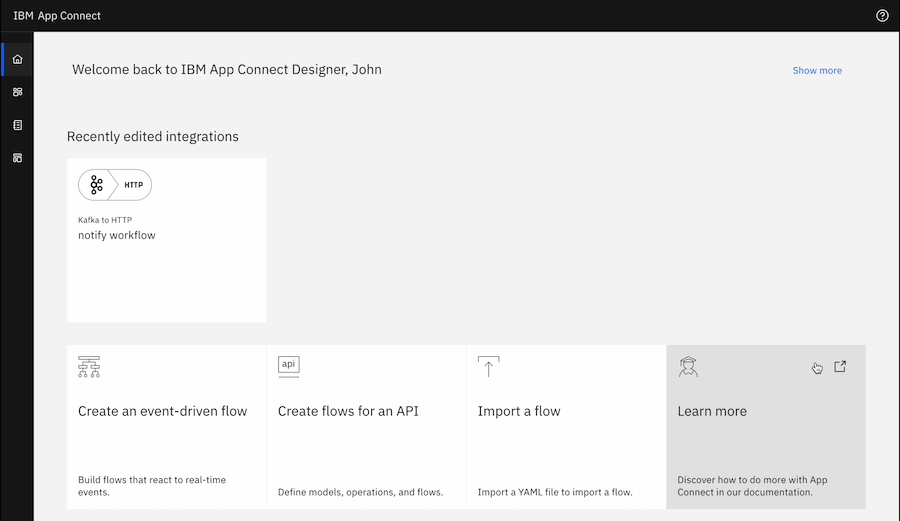

For example, a tool like IBM App Connect can use these output topics to trigger push notifications to the huge range of target systems it supports.

Try this for yourself:

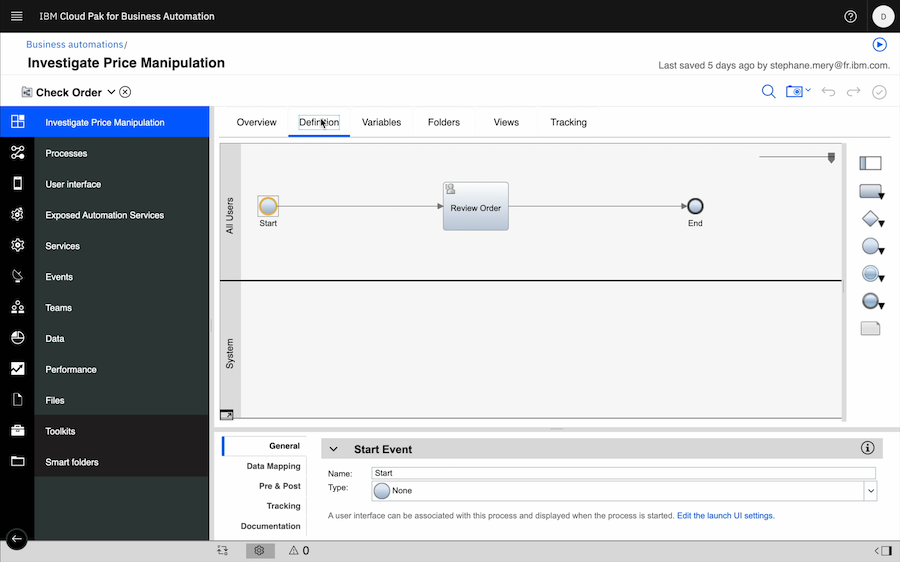

We can also use these output topics to trigger automations and business workflows.

That suspicious orders flow could be used to invoke a workflow for someone to investigate the potentially suspicious order in more detail, driving an immediate response and allowing action to be taken as the order is made, instead of waiting for an end-of-week report.

Finally, I want to introduce the third component of Event Automation.

I started by highlighting how events come from everywhere: dedicated Kafka apps, a huge and growing range of tools and systems that can emit events, and connectors that capture events from dozens of other backend systems, platforms and infrastructure.

We’re enabling that world, where events proliferate from a massive range of disparate systems.

And the value of Event Processing only increases as you bring in more event sources, letting you combine and correlate events from many disparate sources.

So to help with that, we have Event Endpoint Management.

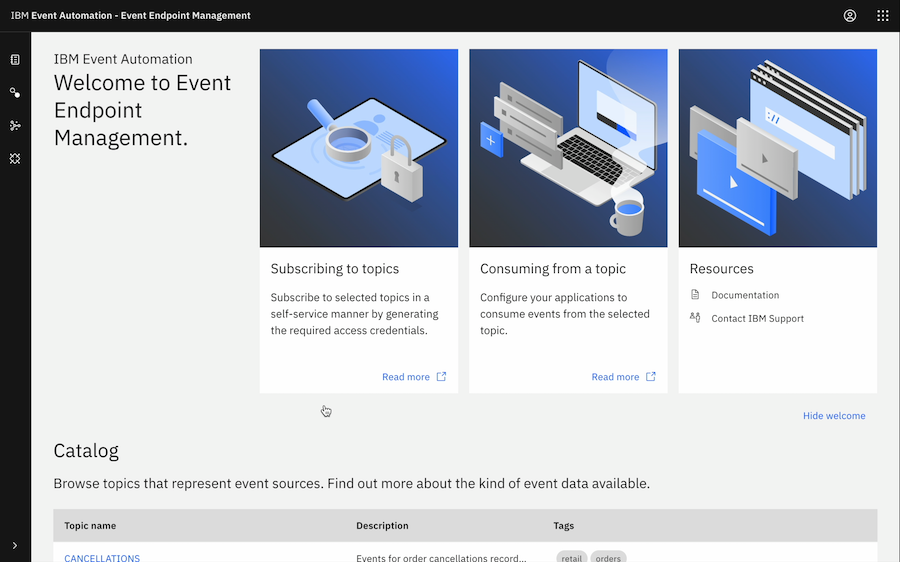

With Event Endpoint Management, businesses can create a self-service catalog for all of their streams of events.

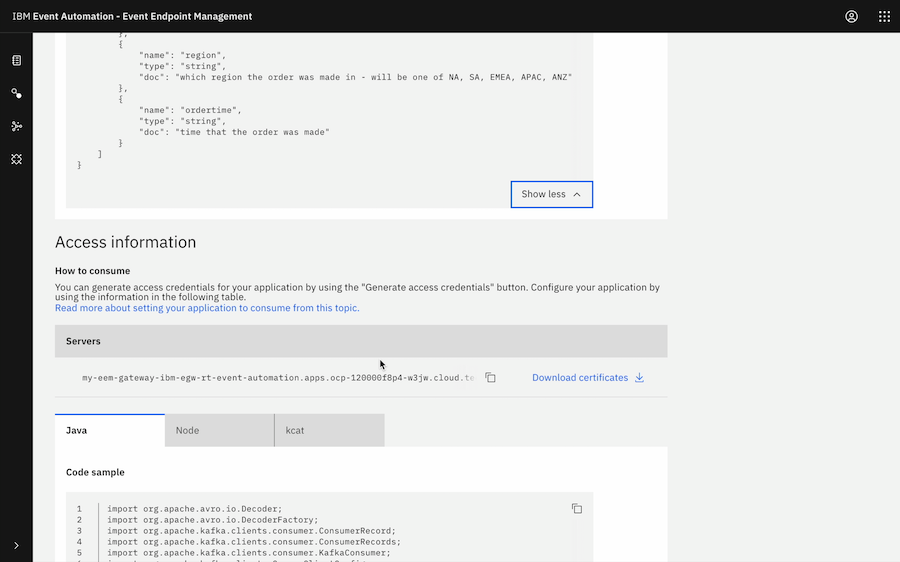

The starting point for all the above event processing flows was discovering that those streams of events existed in the Catalog, seeing the documentation for where the events came from and the schema for the data.

Getting the connection info for them. Creating access credentials.
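The connection details and credentials from the catalog end up in ordinary Kafka client configuration. Here is a minimal sketch that builds a configuration dictionary using librdkafka property names, which clients such as `confluent_kafka.Consumer` accept. It assumes the endpoint uses SASL/PLAIN over TLS; the host name, credentials and group ID are placeholders, and the catalog entry should be treated as the source of truth for the actual mechanism and any CA certificate (`ssl.ca.location`).

```python
def client_config(bootstrap: str, username: str, password: str, group_id: str) -> dict:
    """Build a librdkafka-style consumer configuration from catalog connection details.

    Assumes SASL/PLAIN over TLS; adjust to match what the catalog shows.
    """
    return {
        "bootstrap.servers": bootstrap,    # host:port from the catalog entry
        "security.protocol": "SASL_SSL",   # TLS-encrypted, SASL-authenticated
        "sasl.mechanisms": "PLAIN",
        "sasl.username": username,         # credentials created in the catalog
        "sasl.password": password,
        "group.id": group_id,              # consumer group for this application
        "auto.offset.reset": "earliest",   # oldest retained event if no committed offset
    }

# Placeholder values for illustration only.
config = client_config("gateway.example.com:443", "my-app-user", "my-app-password", "orders-flow")
print(config["bootstrap.servers"], config["security.protocol"])
```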

From finding the topics to starting to bring them together and process them in Event Processing, the end-to-end time for any of those projects can be just minutes.

IBM Event Automation. Enabling businesses to accelerate their event-driven projects, with three components for building an event-driven architecture and rapidly getting value from the events in their existing systems.

For more information:

- Check out our product page

- See our documentation

- Try our guided tutorial for yourself

- Request a demo

- or just ask me!

Tags: apachekafka, flink, ibmeventstreams, kafka