I gave a talk at Current yesterday about how to embed a tiny language model inside your Flink SQL pipeline.

I used a fun mix of demos to show what I think are the main approaches available for using generative AI with Kafka events from a Flink SQL job. Some demos were definitely more sensible than others!

These are the slides I used, and what I’d planned to say.

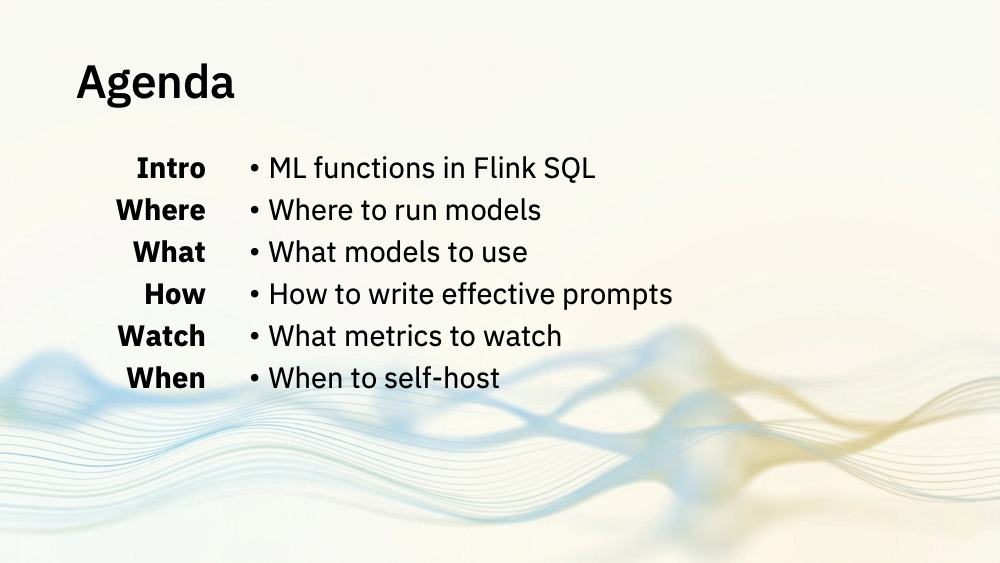

In this session, I’ll be talking about your options for running language models for Flink SQL jobs.

I’ll cover:

- your options for where you run them, in relation to Flink

- what sorts of choices you have for the models you run

- how to use them – the sorts of prompts and settings we’d want for Flink

- how to keep an eye on them, to check that they’re working well

- and finally, some thoughts on when it’s a good idea to do any of this